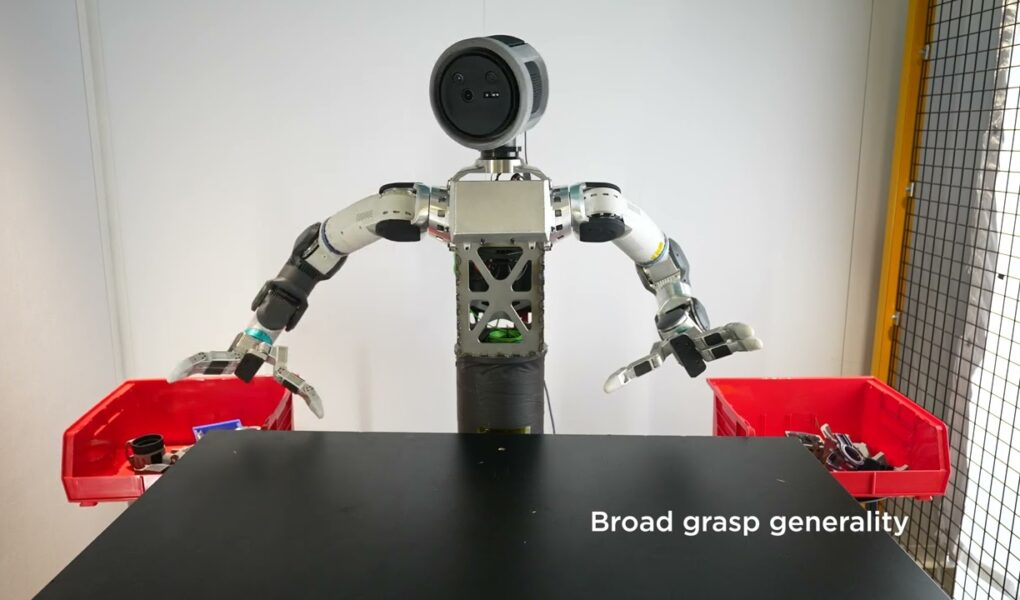

Boston Dynamics

In robotics, dexterity and perception go hand-in-hand, literally! Our Atlas manipulation test stand practices a range of grasps with RL policies trained using NVIDIA’s DextrAH-RGB.

Learn More: https://developer.nvidia.com/blog/r%C2%B2d%C2%B2-adapting-dexterous-robots-with-nvidia-research-workflows-and-models/

Now I'll just wait for a random Chinese company to release their video about a much more advanced robot 😂

You have to add a penalty to the cost function for movement of the object before the grab happens. It could also help the robot with those rapid movements after the grab.
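A minimal sketch of what such a penalty could look like, assuming a per-step cost that already has some task term. This is not the training code behind the video; `pre_grasp_motion_penalty`, `step_cost`, and the `weight` parameter are hypothetical names used only for illustration.

```python
import math

def pre_grasp_motion_penalty(obj_pos, obj_pos_prev, grasped, dt, weight=1.0):
    """Penalize object speed while the object is not yet grasped.

    obj_pos / obj_pos_prev: (x, y, z) object positions at this step and the last.
    grasped: True once the hand holds the object (assumed to come from the simulator).
    """
    if grasped:
        return 0.0  # no penalty after the grab; the object is supposed to move
    speed = math.dist(obj_pos, obj_pos_prev) / dt  # metres per second
    return weight * speed

def step_cost(task_cost, obj_pos, obj_pos_prev, grasped, dt, weight=1.0):
    """Total per-step cost: the existing task cost plus the pre-grasp penalty."""
    return task_cost + pre_grasp_motion_penalty(
        obj_pos, obj_pos_prev, grasped, dt, weight
    )
```

With this shaping, bumping the object 5 cm in a 0.1 s step before the grab adds `weight * 0.5` to the cost, while the same motion after the grab adds nothing. Penalizing the rapid movements after the grab would need a separate term, for example on hand acceleration.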

Atlas should try to build a LEGO set next.

When will they be available for purchase?

Train them to be gentle on things instead of throwing them 😂

I'm going to be brutally honest: I'm not too worried about losing my job to this guy. By the time this guy is finally ready to compete, I'll already have been decomposing in the ground for a while.

Oh god we're all dead.

What's with the robot having to move its head?

Should have gotten 360-degree vision, no need to move the head

Me putting my toys away at age 5

I liked seeing training with glass cups 😅

Sweet, so we have a plan to take care of all the workers this will displace, right?

r-right guys?

you guys are epic

Trying to mimic human anatomy in robots is idiotic. There is a reason robot vacuums do not look like a person with a vacuum cleaner and a welding robot in a car factory does not look like a guy with a welding tool. Humanoid robots are good only for hype and maybe a very niche field of prosthetics, but even there you can get much more effective manipulators if you stop trying to mimic human anatomy.

1:26 I must have watched this catch about 100x lmao impressive!!!

I love the sort of over-animated motion, it's cute

I think the most impressive thing here is that it tried again to pick up that last object, which shows that the robot knows what a "bad grab" is

Why not design a movement system that the machine learning iterates on top of? It seems like you could save a lot of time, let alone make something much better than what this thing is doing.

We need a video of arm wrestling Atlas

Are you by chance…a pleasure model?

When I use ChatGPT I always write "thank you"

Respectfully what's the point?

Use a smaller table, so it can handle objects that need to be grabbed without sliding or falling off the table.

You forget you're just looking at a computer with moving parts. Incredible.