For years, Mr. Musk, along with other pundits, philosophers and technologists, has warned that machines could spin outside our control and somehow learn malicious behavior their designers didn’t anticipate. At times, these warnings have seemed overblown, given that today’s autonomous car systems can get tripped up by even the most basic tasks, like recognizing a bike lane or a red light.

But researchers like Mr. Amodei are trying to get ahead of the risks. In some ways, what these scientists are doing is a bit like a parent teaching a child right from wrong.

Many specialists in the A.I. field believe a technique called reinforcement learning — a way for machines to learn specific tasks through extreme trial and error — could be a primary path to artificial intelligence. Researchers specify a particular reward the machine should strive for, and as it navigates a task at random, the machine keeps close track of what brings the reward and what doesn’t. When OpenAI trained its bot to play Coast Runners, the reward was more points.
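
To make the idea concrete, here is a minimal sketch in Python. It is not OpenAI's system or anything close to it. It assumes a toy task with three possible actions, each paying a reward with a fixed, hidden probability. The function name `train`, the `epsilon` exploration rate and the probabilities themselves are illustrative assumptions, not details from the research.

```python
import random

def train(reward_probs, episodes=10000, epsilon=0.2, seed=0):
    """Learn by trial and error which action tends to bring a reward.

    reward_probs is a hypothetical stand-in for the task: the chance that
    each action pays off. The learner never sees these numbers directly.
    """
    rng = random.Random(seed)
    n = len(reward_probs)
    counts = [0] * n      # how many times each action has been tried
    values = [0.0] * n    # running average reward observed for each action

    for _ in range(episodes):
        if rng.random() < epsilon:
            # Explore: try an action at random.
            action = rng.randrange(n)
        else:
            # Exploit: repeat whatever has paid off best so far.
            action = max(range(n), key=lambda a: values[a])

        # The task hands back a reward of 1 or 0.
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0

        # Keep track of what brings the reward and what doesn't.
        counts[action] += 1
        values[action] += (reward - values[action]) / counts[action]

    return values

estimates = train([0.2, 0.5, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print("estimated payoff per action:", estimates)
print("action the learner prefers:", best_action)
```

The learner is told nothing about the task except the reward it receives, which is why the choice of reward matters so much: it will pursue whatever it is paid for, whether or not that matches what its designers had in mind.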

This video game training has real-world implications.

If a machine can learn to navigate a racing game like Grand Theft Auto, researchers believe, it can learn to drive a real car. If it can learn to use a web browser and other common software apps, it can learn to understand natural language and maybe even carry on a conversation. At places like Google and the University of California, Berkeley, robots have already used the technique to learn simple tasks like picking things up or opening a door.

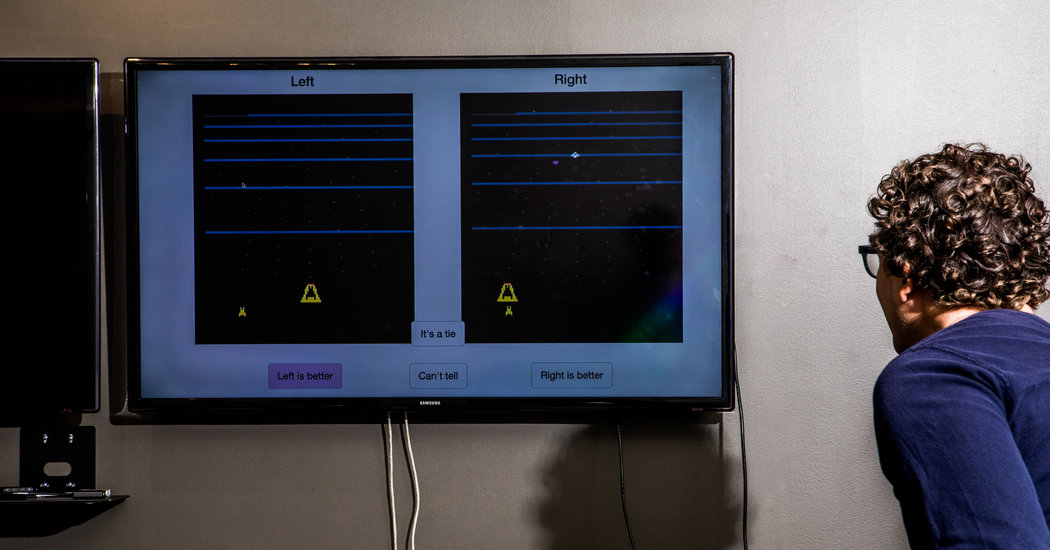

All this is why Mr. Amodei and Mr. Christiano are working to build reinforcement learning algorithms that accept human guidance along the way. This can ensure systems don’t stray from the task at hand.

Together with others at the London-based DeepMind, a lab owned by Google, the two OpenAI researchers recently published some of their research in this area. Spanning two of the world’s top A.I. labs — and two that hadn’t really worked together in the past — these algorithms are considered a notable step forward in A.I. safety research.

“This validates a lot of the previous thinking,” said Dylan Hadfield-Menell, a researcher at the University of California, Berkeley. “These types of algorithms hold a lot of promise over the next five to 10 years.”

The field is small, but it is growing. As OpenAI and DeepMind build teams dedicated to A.I. safety, so too is Google’s stateside lab, Google Brain. Meanwhile, researchers at universities like U.C. Berkeley and Stanford University are working on similar problems, often in collaboration with the big corporate labs.

By CADE METZ

https://www.nytimes.com/2017/08/13/technology/artificial-intelligence-safety-training.html
