Theo – t3․gg

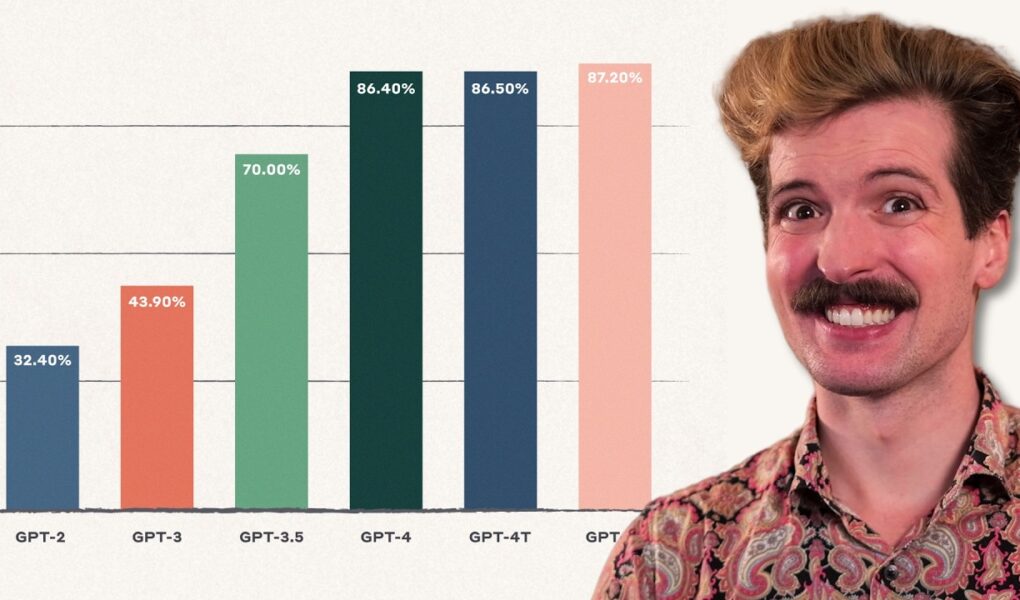

We’ve seen insane advancements from OpenAI, ChatGPT, Anthropic, and basically every other LLM provider. But it seems like those advancements have slowed. Is this the “Moore’s law” of LLMs breaking down? Can we blame NVIDIA? Let’s talk about it.

SOURCES

https://gist.github.com/t3dotgg/f88745964786d5153938366eb18b2db0

Check out my Twitch, Twitter, Discord, and more at https://t3.gg

S/O Ph4se0n3 for the awesome edit 🙏

They've used all of the data. If they can't find a way to use it better, that's it: no more change.

The key for a better future is to get rid of socialism, DEI, ADL, and other woke crap.

Why would we want to create an open source (i.e. free) learning and free-thinking AI?

Moore's CPU S-curve

The fast inverse square root algo was NOT used in Doom. The screen shows one thing, the person says another. In the same way, the graph doesn't show a slowdown in AI capabilities. It's still linear. It only shows that models get released more frequently.

At this point AI is as good as a junior developer. Not much longer until it's as good as a senior. Then better than seniors. That's how human progress goes.

This aged well.

😀

Well this aged well…

Heh

He says this, and then we get o3 beating ARC-AGI 4 months later. Shut up, man, AI isn't stopping any time soon 😂😂😂

People always say this when a new level of capability is achieved, and then the exact opposite happens. Just take the L and realize what's going on: IT'S THE INTELLIGENCE AGE for a reason.

thanks! big fan!!

Well, it's 4 months later as I write this… GPT keeps releasing new models, no plateau at the moment.

Really limited comment. Why be bothered by AI hitting the wall when it’s like 100 IQ points above you?

I’m not implying that’s totally good. Yet, it’s real.

Not to mix in politics, but it happens that “liberals” have dragged in all kinds of delusional wannabes.

Moore's Law made IC designers lazy. Instead of making creative designs, IC designers just relied on newer technologies improving their performance.

Also Moore was not a dev lol he was an engineer (the hardware kind)

What a very confident statement.

I want to invite you to "Attention Is All You Need" AI research for better transformers.

Even GPUs have their limits. The farther the cores physically are from one another, the lower the performance, exponentially so. So for time-dependent tasks like gaming, the GPU has a limit.

How do you feel about it now?

I no longer believe what I said in this video. Updated take here: https://youtu.be/Kzf-tL8zyfo?si=IU-0BajX1F6FbBHz

Nah, Yann is right there, don't waste your time on LLMs. They're a party trick, a storm in a teacup. XGBoost is still more valuable and useful than all the LLMs combined.

"General AI" methods have failed repeatedly. Saurwein doesn't know what he's talking about. Fast inverse square root isn't that special.

I'm pretty sure the future is not in "leaving behind LLMs", because LLMs are a big part of how human intelligence works. I suspect the future will be in (1) figuring out and applying other stuff we do with our brains – while LLMs are a big part, they're not the only part; and (2) massively scaling up, presumably by figuring out better low-level implementations than CPUs or GPUs, because the human brain is a vastly larger neural net than current LLMs.

Also, we'll have to accept that the way much of SF paints AGI is just fundamentally wrong. Instead, "real" AGI is going to be much more similar to how our minds work. It's not going to be perfectly logical or free of errors (or immune to logic traps) for the same reasons we aren't. Similarly, it's going to fall for many of the traps human minds can fall into, such as conspiracy theories.

This makes me sad, I was under the illusion that scaling up LLMs would lead to AGI by 2029.

How can we intuitively say how far large language models can go?

Well, essentially as far as a math student in university who only lets himself be carried by the theorems and definitions. Yes, if he masters them all, he can be better than 99.9% of humans. He can be a leader for the weak. But he can never be a leader of the real professors. Why? He is on a track; he is just a good, fast train. He isn’t a bird. He needs to go to another dimension to be able to create new theorems. He has to become independent of them and solve the task without the university lecture by figuring out the theorems himself. This requires a quality most universities don’t directly teach: intuition for the unknown. It’s a quality almost synonymous with clairvoyance: feeling which kinds of theorems are possible without having seen them.

Trains are powerful, but they follow tracks. Birds invent the sky.

This aged like fine wine

Apple did NOT invent the "specialized chips" concept … the Amiga was doing it in the 80's

https://www.youtube.com/watch?v=5eqRuVp65eY AI is already hitting a line that we cannot go past. However this is apparently only for transformers.

So uh… how's this going?

aged like milk 😭✌️